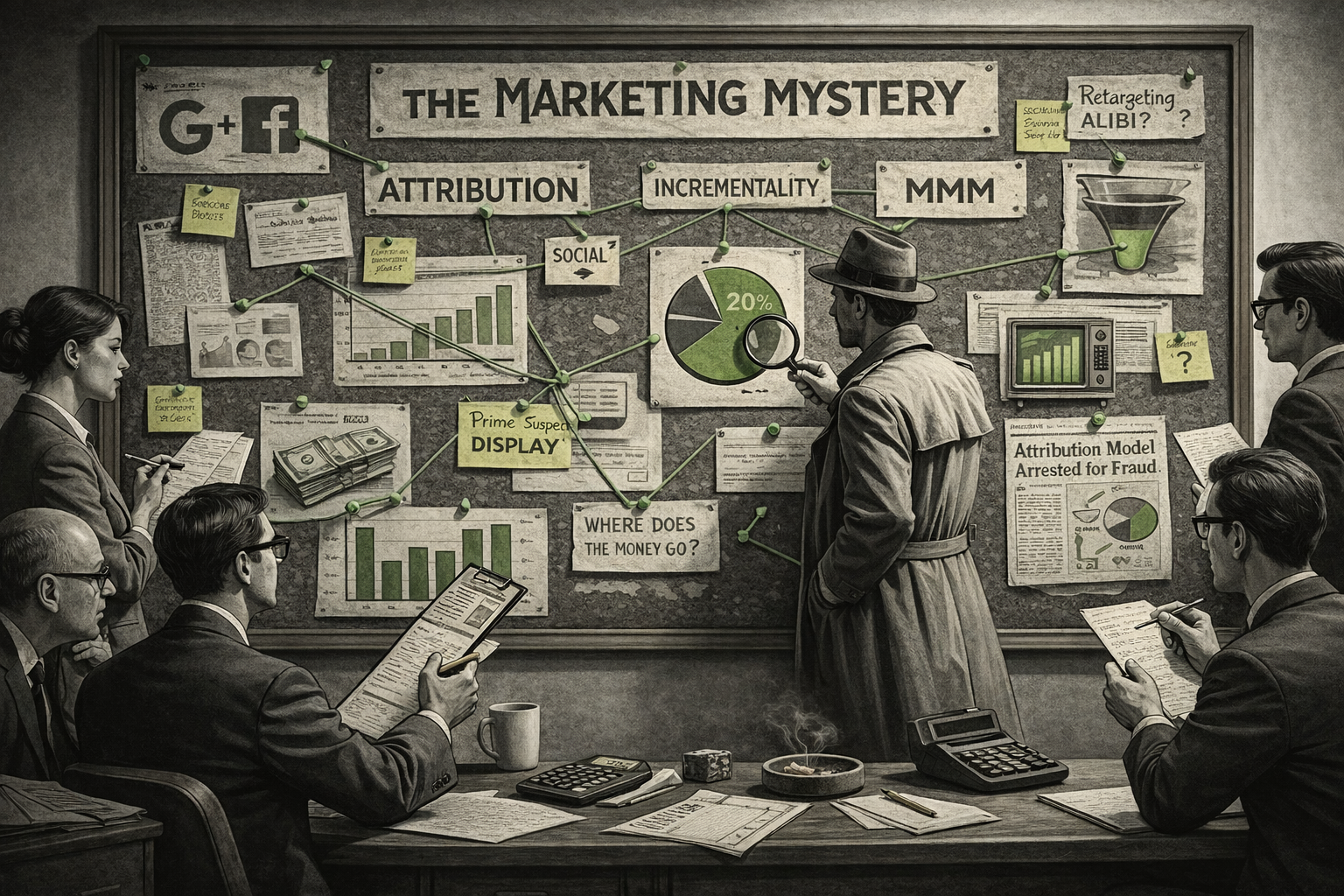

Our Guide to Marketing Attribution, Incrementality and MMM for 2026

At the heart of every ecommerce growth conversation sits one deceptively simple question:

Where should I put my paid media dollars to get the best return?

Attribution, incrementality, and MMM each offer a piece of the answer. Together, they help you understand what’s actually working across your channels.

In this guide, we break down all three approaches in plain language and show you how to use them in combination for smarter, more resilient decision-making in 2026.

Method 1: Marketing Channel Attribution

Attribution attempts to answer:

“If my total sales last month were $10,000 and I spent $2,000 on paid media, how much revenue can I attribute to paid and other channels?”

Attribution sounds simple, but in reality it has major limitations. Here are four examples:

1. Attribution data is incomplete or biased

Attribution data lives across several platforms, each with different tracking methods, different definitions of a “conversion,” and different incentives.

Using the wrong attribution model

There’s plenty written about attribution models, but very few marketers use them for the right purpose.

Last click

Most reporting still defaults to “last click.”

That means 100% of the credit goes to the channel the user clicked most recently before converting.

If the customer saw multiple ads, say video ads on Meta before later clicking a Google Search ad, the last click ignores everything except the final touch.

Last click is useful for understanding which channels close the sale, but not which channels create demand.

First click does the opposite: it highlights channels driving awareness and consideration, but ignores the closing channels.

Neither tells the full story.
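
To make the difference concrete, here is a minimal sketch of both rules applied to the same set of converting journeys. The journeys and channel names are hypothetical, invented purely for illustration.

```python
from collections import Counter

# Hypothetical converting journeys: ordered channel touches before each purchase.
journeys = [
    ["meta_video", "meta_video", "google_search"],
    ["meta_video", "email", "google_search"],
    ["google_search"],
    ["email", "meta_video"],
]

def last_click(paths):
    """Give 100% of each conversion to the final touch."""
    return Counter(path[-1] for path in paths)

def first_click(paths):
    """Give 100% of each conversion to the first touch."""
    return Counter(path[0] for path in paths)

last = last_click(journeys)
first = first_click(journeys)
print("last click: ", dict(last))
print("first click:", dict(first))
```

Both rules hand out the same four conversions, but they credit different channels: in this toy data, search dominates under last click while Meta video leads under first click.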

Data-driven attribution

Data-driven models (such as Markov Chains or Shapley Values) try to solve this by asking:

“What is the probability of a sale if this channel didn’t exist?”

Platforms like GA4 and Google Ads now offer data-driven attribution.

But they still depend on the quality of your input data, and that’s where most problems begin.
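
As a rough illustration of the “what if this channel didn’t exist?” idea, the sketch below computes Shapley values for three channels. The conversion rates for each channel combination are hypothetical, and this is a simplified teaching example, not a description of how GA4 or Google Ads implement their models.

```python
from itertools import permutations

channels = ["meta", "search", "email"]

# Hypothetical conversion rate for users exposed to each set of channels.
conv_rate = {
    frozenset(): 0.00,
    frozenset({"meta"}): 0.02,
    frozenset({"search"}): 0.04,
    frozenset({"email"}): 0.01,
    frozenset({"meta", "search"}): 0.08,
    frozenset({"meta", "email"}): 0.03,
    frozenset({"search", "email"}): 0.05,
    frozenset({"meta", "search", "email"}): 0.10,
}

def shapley(channels, value):
    """Average each channel's marginal contribution over all join orders."""
    totals = {c: 0.0 for c in channels}
    orders = list(permutations(channels))
    for order in orders:
        seen = frozenset()
        for c in order:
            with_c = seen | {c}
            totals[c] += value[with_c] - value[seen]
            seen = with_c
    return {c: total / len(orders) for c, total in totals.items()}

credit = shapley(channels, conv_rate)
for channel, share in credit.items():
    print(f"{channel}: {share:.4f}")
```

The credits always sum to the conversion rate of the full channel set, so nothing is double counted. The catch is the one noted above: the `conv_rate` table has to come from your journey data, and gaps in that data flow straight into the result.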

2. Incomplete analytics data

Even a “perfectly” configured GA4 setup will still struggle with:

Consent banners removing tracking signals

Users switching devices

Cross-domain checkouts breaking cookies

Missing identifiers

Session stitching that isn’t always reliable

GA4’s data-driven model can spread credit across channels, but if the underlying user journey data is incomplete, the attribution will still be flawed.

The BigQuery GA4 export is invaluable for cleaning up the “eccentricities” in the raw data before attribution analyses are run. It allows for easier channel grouping, removal of direct sources, and session stitching, all of which are needed for better attribution reporting than the standard GA4 interface provides.
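
One common reading of “removal of direct sources” is a last non-direct rule: a direct session inherits the most recent known source. The sketch below assumes sessions have already been pulled into simple `(session_id, source)` tuples. That shape and the source labels are hypothetical and are not the GA4 export schema.

```python
# Hypothetical sessions for one user, oldest first, as (session_id, source).
sessions = [
    (1, "google / cpc"),
    (2, "(direct)"),
    (3, "meta / paid_social"),
    (4, "(direct)"),
    (5, "(direct)"),
]

def last_non_direct(sessions, direct_label="(direct)"):
    """Replace direct sessions with the most recent non-direct source, if any."""
    cleaned = []
    previous = None
    for session_id, source in sessions:
        if source != direct_label:
            previous = source
        # Keep the direct label only when no earlier source is known.
        cleaned.append((session_id, previous or source))
    return cleaned

cleaned = last_non_direct(sessions)
for session_id, source in cleaned:
    print(session_id, source)
```

A production version would normally add a lookback window so that a source from many months ago does not claim today’s direct visit.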

3. Double counting from ad networks

Modern ad platforms use Conversion APIs (CAPI) to match conversions back to impressions or clicks.

These are very accurate for matching events to users—but the attribution rules are extremely generous to each ad platform.

For example, Meta’s default 7-day click / 1-day view window means Meta will take full credit if:

a user saw an ad within 1 day of converting, or

clicked an ad within 7 days

… regardless of what else the user did in between.

Google Ads does something similar, as does LinkedIn. This leads to overlapping credit, meaning you double count conversions across channels.
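
The overlap is easy to reproduce with a toy example. In the sketch below, the orders, touches and window lengths are all hypothetical. Each platform claims any order where one of its own touches falls inside its window, without looking at the other platforms.

```python
from datetime import datetime, timedelta

# Hypothetical orders, each with the ad touches that preceded it.
orders = [
    {"id": "A", "time": datetime(2026, 1, 10, 12), "touches": [
        ("meta", "view", datetime(2026, 1, 10, 9)),
        ("google", "click", datetime(2026, 1, 10, 11)),
    ]},
    {"id": "B", "time": datetime(2026, 1, 12, 12), "touches": [
        ("meta", "click", datetime(2026, 1, 8, 12)),
        ("linkedin", "click", datetime(2026, 1, 11, 12)),
    ]},
    {"id": "C", "time": datetime(2026, 1, 15, 12), "touches": []},
]

# Illustrative windows only; check each platform's current settings.
windows = {"view": timedelta(days=1), "click": timedelta(days=7)}

def claimed_orders(platform):
    """Orders a platform would claim: any own touch inside its window."""
    claimed = set()
    for order in orders:
        for touch_platform, kind, when in order["touches"]:
            age = order["time"] - when
            if touch_platform == platform and timedelta(0) <= age <= windows[kind]:
                claimed.add(order["id"])
    return claimed

claims = {p: claimed_orders(p) for p in ("meta", "google", "linkedin")}
platform_total = sum(len(ids) for ids in claims.values())
print("claims per platform:", claims)
print("sum of platform-reported conversions:", platform_total)
print("actual orders:", len(orders))
```

Here the platforms report four conversions between them, even though only three orders exist and one of those had no ad touch at all.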

4. Attribution only tells you the past

Attribution reports are backward-looking.

They can tell you what happened last week or last month, but not necessarily what will happen next.

And when budgets, markets, seasonality, competition and algorithms all change continuously, yesterday’s attribution isn’t a perfect guide to tomorrow’s decisions.

This is where incrementality comes in.

Method 2: Incrementality

Incrementality became one of the buzzwords of 2025, with platforms promising to reveal the “real” value of your marketing.

But what is incrementality?

At its simplest, it answers two questions:

If I reduce spend in a channel, how much will my sales fall?

If I increase spend in a channel, how much will my sales increase?

Incrementality isolates the causal impact of a channel—what it is truly adding over and above what would have happened anyway.

It’s the difference between:

“Meta reports it drove 500 conversions”

versus “If I turned Meta ads off, I would lose 140 conversions.”

The second number is incrementality—and it’s what you should care about.
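
The arithmetic behind that comparison is simple. The sketch below reuses the 500 and 140 figures from the example, while the spend figure is a hypothetical added only to show the effect on cost per acquisition.

```python
reported_conversions = 500      # what the platform claims (from the example)
incremental_conversions = 140   # what would be lost if the ads were turned off
spend = 7_000.0                 # hypothetical monthly spend, for illustration only

incrementality = incremental_conversions / reported_conversions
reported_cpa = spend / reported_conversions
incremental_cpa = spend / incremental_conversions

print(f"incremental share of reported conversions: {incrementality:.0%}")
print(f"platform-reported CPA: ${reported_cpa:.2f}")
print(f"incremental CPA:       ${incremental_cpa:.2f}")
```

Under these assumptions only 28% of the reported conversions are incremental, so the true cost of each extra sale is several times higher than the platform’s own CPA suggests.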

Why incrementality matters

Knowing incrementality means you can:

Shift budget to the highest-impact channels

Reduce waste in over-credited platforms

Forecast the impact of changes in spend

Understand where saturation points are

Differentiate between brand-driven sales and paid-driven sales

Incrementality is the bridge between attribution (what happened) and planning (what to do next).
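
To illustrate why saturation matters for budget shifts, the sketch below compares average return with the return on the next dollar. The saturating curve shape, its parameters and the spend levels are all hypothetical. In practice they would come from your own tests or models.

```python
def revenue(spend, max_revenue, half_saturation):
    """Hypothetical saturating response: revenue flattens as spend grows."""
    return max_revenue * spend / (spend + half_saturation)

def marginal_return(spend, max_revenue, half_saturation, step=1.0):
    """Extra revenue from the next `step` dollars at the current spend level."""
    return revenue(spend + step, max_revenue, half_saturation) - revenue(
        spend, max_revenue, half_saturation
    )

# Hypothetical curve parameters and current monthly spend per channel.
channels = {
    "search": {"spend": 8_000, "max_revenue": 40_000, "half_saturation": 2_000},
    "meta": {"spend": 2_000, "max_revenue": 30_000, "half_saturation": 6_000},
}

results = {}
for name, c in channels.items():
    curve = (c["max_revenue"], c["half_saturation"])
    results[name] = {
        "average_roas": revenue(c["spend"], *curve) / c["spend"],
        "next_dollar": marginal_return(c["spend"], *curve),
    }
    print(
        f"{name}: average ROAS {results[name]['average_roas']:.2f}, "
        f"next-dollar return {results[name]['next_dollar']:.2f}"
    )
```

In this toy setup search has the higher average ROAS but is close to saturation, so the next dollar earns more on Meta. Average figures alone would point the budget the wrong way.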

Types of Incrementality Measurement

There are three practical approaches:

1. True Experiments (geo tests or audience splits)

The gold standard.

You intentionally turn spend off (or down) in one region or group and keep everything else the same.

This is the most accurate approach, but it is often disruptive and sometimes expensive.
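
A simple way to read a geo test is a difference-in-differences comparison: how did the test regions change relative to how the control regions changed? The sketch below uses hypothetical weekly sales figures and leaves out significance testing, which a real experiment would need.

```python
# Hypothetical weekly sales (units) for matched regions.
# "test" regions had ads paused in the post period; "control" regions did not.
sales = {
    "test": {"pre": [100, 104, 98, 102], "post": [88, 90, 86, 92]},
    "control": {"pre": [200, 196, 204, 200], "post": [206, 202, 208, 208]},
}

def mean(values):
    return sum(values) / len(values)

def did_lift(sales):
    """Difference-in-differences on relative change between test and control."""
    test_change = mean(sales["test"]["post"]) / mean(sales["test"]["pre"]) - 1
    control_change = mean(sales["control"]["post"]) / mean(sales["control"]["pre"]) - 1
    return test_change - control_change

effect = did_lift(sales)
print(f"estimated effect of pausing ads: {effect:.1%}")
```

With these made-up numbers, sales in the paused regions fell by roughly 15% relative to the control trend, which is the estimate of what the ads were adding.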

2. Natural experiments using historical data

This is where statistical methods use past fluctuations to estimate cause and effect.

These are cheaper but rely heavily on the quality of your data and the stability of your market.

3. Platform-assisted incrementality tools

Google, Meta and third-party vendors now offer automated lift tests.

These are more accessible than custom experiments but still rely on the platform’s rules and bias.

Method 3: Marketing Mix Modelling (MMM)

Once a popular but unreliable buzzword, MMM is becoming increasingly popular again, especially with rising privacy restrictions and fewer reliable user-level signals.

The above sections deal with ecommerce as siloed cause (online ads) and effect (website sales). For most companies, understanding channel impact is far more complex.

That’s because advertising budgets may include television, radio, or sponsorships, all of which are harder to measure than digital. Further complicating matters are offline sales that come through bricks-and-mortar retailers and third-party marketplaces such as Amazon. Understanding the origins of these sales can seem extremely difficult.

The tendency we see in client organizations is to judge each channel’s performance in isolation, mostly because it’s easier to do so. This creates a bias towards investing in channels simply because they’re easier to measure, and towards judging them only on the sales recorded directly within the channel. The ideal is to measure marketing as a whole system.

Instead of the deterministic view of “someone clicked this ad then bought this product,” MMM seeks to answer media effectiveness questions through statistical inference. The combined effect and efficiency of all media bought can be assessed against sales across all channels.

MMM can statistically estimate:

The incremental impact of each channel

The saturation point of each channel

The diminishing returns curve

Seasonality and external factors (e.g., weather, holidays, market conditions)

The optimal budget allocation for the future

It doesn’t require cookies or user-level tracking, making it privacy-proof and robust for long-term planning.

However, MMM does require a lot of data to develop estimates effectively. Ad and sales data need to be broken down by time increment and geography. And years of data are needed to build a model with good predictive power.

The good news is that the recent breakthroughs in machine learning mean regression analyses can be run faster and more effectively.

MMM is mostly science, but with a liberal dash of art; expertise in R or Python is essential to build and validate the model.
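
To give a flavour of what such a model does, here is a deliberately tiny sketch in plain Python. It applies two common MMM transforms, carryover (adstock) and diminishing returns (saturation), then fits a regression. Everything in it is an assumption made for illustration: the spend series is invented, sales are generated without noise from known coefficients, and the decay and saturation parameters are treated as known, whereas a real model has to estimate them from years of data.

```python
def adstock(spend, decay):
    """Geometric adstock: part of each week's effect carries into later weeks."""
    carried, out = 0.0, []
    for x in spend:
        carried = x + decay * carried
        out.append(carried)
    return out

def saturate(values, half_saturation):
    """Diminishing returns: response approaches 1 as the input grows."""
    return [v / (v + half_saturation) for v in values]

def fit_ols(columns, y):
    """Least squares with intercept, solving the normal equations directly."""
    rows = [[1.0] + [col[i] for col in columns] for i in range(len(y))]
    k = len(rows[0])
    # Augmented matrix [X'X | X'y].
    aug = [
        [sum(r[i] * r[j] for r in rows) for j in range(k)]
        + [sum(r[i] * target for r, target in zip(rows, y))]
        for i in range(k)
    ]
    for i in range(k):  # Gauss-Jordan elimination with partial pivoting
        pivot_row = max(range(i, k), key=lambda r: abs(aug[r][i]))
        aug[i], aug[pivot_row] = aug[pivot_row], aug[i]
        pivot = aug[i][i]
        aug[i] = [v / pivot for v in aug[i]]
        for r in range(k):
            if r != i:
                factor = aug[r][i]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[i])]
    return [row[-1] for row in aug]

# Hypothetical weekly spend (in thousands) for two channels.
tv_spend = [0, 10, 0, 0, 20, 0, 5, 0]
search_spend = [3, 4, 5, 3, 6, 2, 4, 5]

tv_x = saturate(adstock(tv_spend, decay=0.5), half_saturation=10)
search_x = saturate(adstock(search_spend, decay=0.0), half_saturation=5)

# Synthetic, noise-free sales: base 100, TV worth up to 50, search up to 30.
sales = [100 + 50 * t + 30 * s for t, s in zip(tv_x, search_x)]

base, tv_coef, search_coef = fit_ols([tv_x, search_x], sales)
print(f"base sales {base:.1f}, TV {tv_coef:.1f}, search {search_coef:.1f}")
```

Because the data is synthetic and noise-free, the fit recovers the coefficients used to generate it. Real data is noisy, channels move together, and the transform parameters are unknown, which is where the “art” and the validation work come in.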

How Attribution, Incrementality and MMM Fit Together

A modern marketer should think of these three tools as complementary:

Attribution: the micro view

Shows recent performance and touchpoint paths.

Good for optimization within a channel (keywords, audiences, creatives).

Incrementality: the forward view

Shows which channels truly drive growth.

Good for budget shifts and understanding cause and effect.

MMM: the macro view

Shows long-term, big-picture investment strategy.

Good for forecasting and planning the next quarter or year.

Together, they create a complete measurement system:

Attribution → what happened and what may happen in the future

Incrementality → which channels have increasing and diminishing return on ad spend

MMM → what the general ad spend levels should be per channel

Our Attribution Recommendation for 2026

Marketers need to rely on a measurement stack that blends:

Attribution to understand user journeys

Incrementality testing to understand causal impact

MMM to plan and optimize comprehensive cross-channel budgets

No single approach gives the full picture; each fills a gap the others leave behind.

The brands that thrive will be those that combine these methods to answer the most important question:

“Where should my next $1 of marketing budget go?”